I use GenAI as one stage in a production pipeline, not as a one-click solution. My focus is control and repeatability: reference-driven generation, consistency checks, and integration into compositing and editing so results remain art-directable and deliverable.

AI Filmmaking & Generative Video

I studied generative AI filmmaking at NYU Tisch School of the Arts as part of the MPS in Virtual Production program, where Runway’s AI video tools were integrated into coursework at the Martin Scorsese Virtual Production Center.

Through hands-on projects and thesis development, I explored how AI can be used for concept development, previsualization, and experimental storytelling, under the guidance of faculty working closely with emerging AI filmmaking pipelines.

Read more about the NYU x Runway AI filmmaking course: No Film School article

ZINE: MARS, BABY

Concept Visualization / Pre-Production

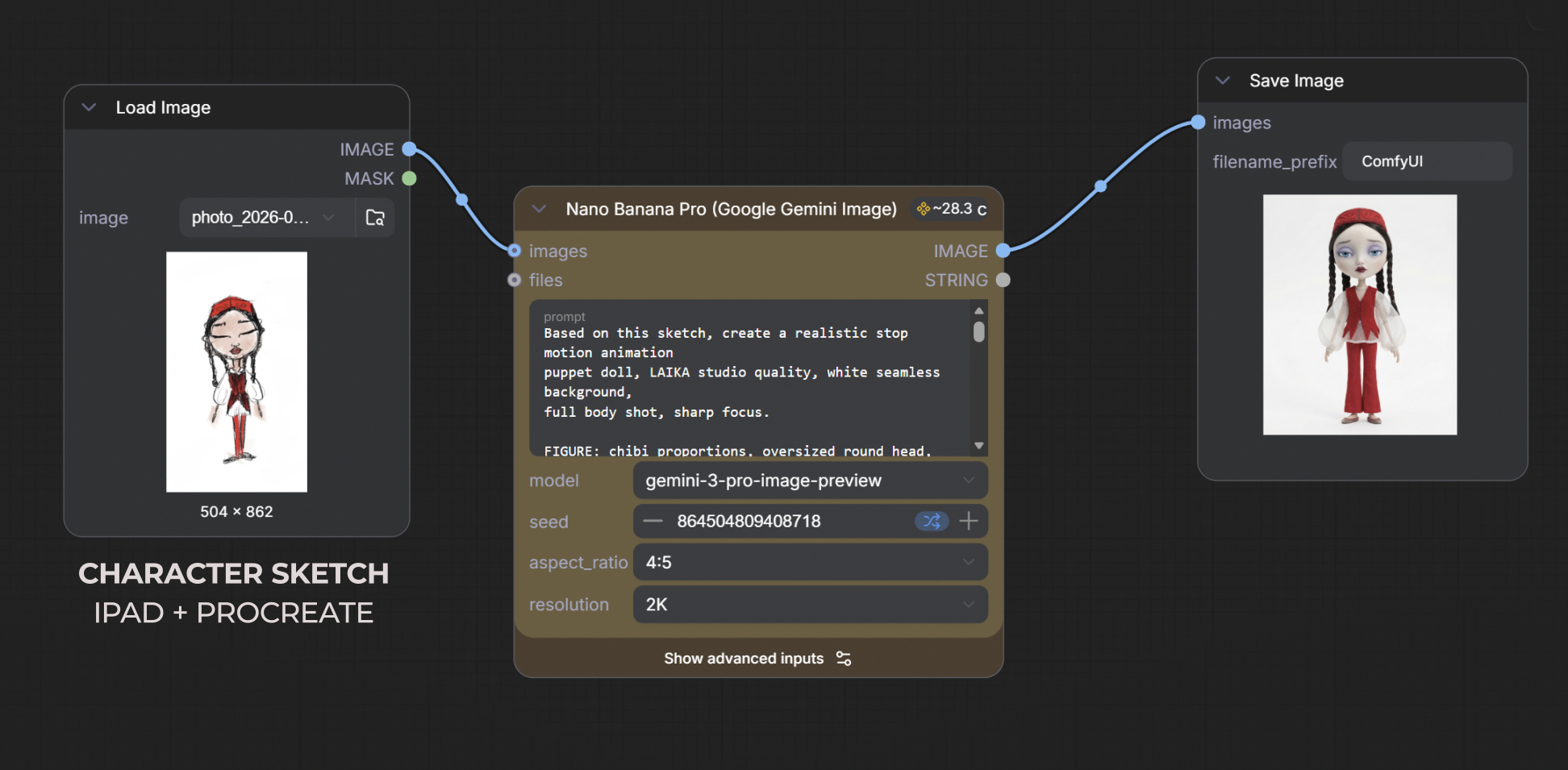

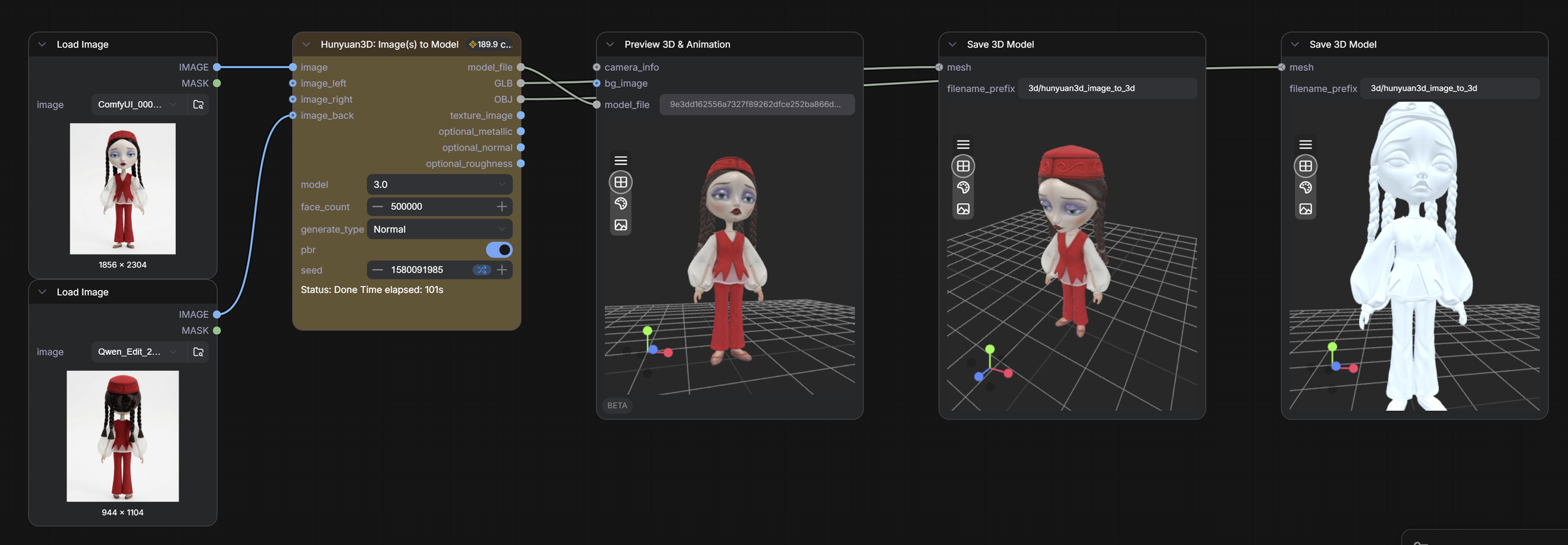

Concept visualization before a single puppet is built. This workflow takes a hand-drawn character sketch (iPad + Procreate) and generates consistent multi-angle previs references using Nano Banana Pro and Higgsfield AI. Used in early pre-production for Sawdust and Snow (a stop-motion film) to explore costume, proportion, and silhouette before physical fabrication begins.

AI Doll Commercial

Born from code and sea foam

Mermaid Doll Commercial / Character Development

For the mermaid doll commercial, I used Google's generative AI tools alongside Higgsfield AI as a creative playground to design and evolve the character while keeping her visually consistent and instantly recognizable. I approached the mermaid as a product character: cute, collectible, and brand-ready, rather than a one-off AI output.

Using tools like Nano Banana for image generation, Veo for video, and Higgsfield AI for concept iteration and visual development, I built a workflow that allowed me to iterate quickly while maintaining control over the character's identity. I explored variations in expression, styling, and mood, curating results the way a creative team would develop and refine a branded asset.

What I value most is how these tools support fast, idea-driven workflows. They allowed me to move from concept to visuals rapidly, test directions, and then carry the strongest outputs into animation, compositing, and final edits. In this process, AI wasn't the final product; it was the tool that made exploration more intentional and controlled.

Concepting with AI

A collection of creative development work where generative AI tools serve as the first pencil, not the final brush. These projects explore how Higgsfield AI, Nano Banana, Google Veo, Runway, and Midjourney can accelerate the concepting phase, compress iteration cycles, and make early-stage creative decisions faster and more visual.

AI here is a thinking tool. The ideas, the direction, and the curation are always human.

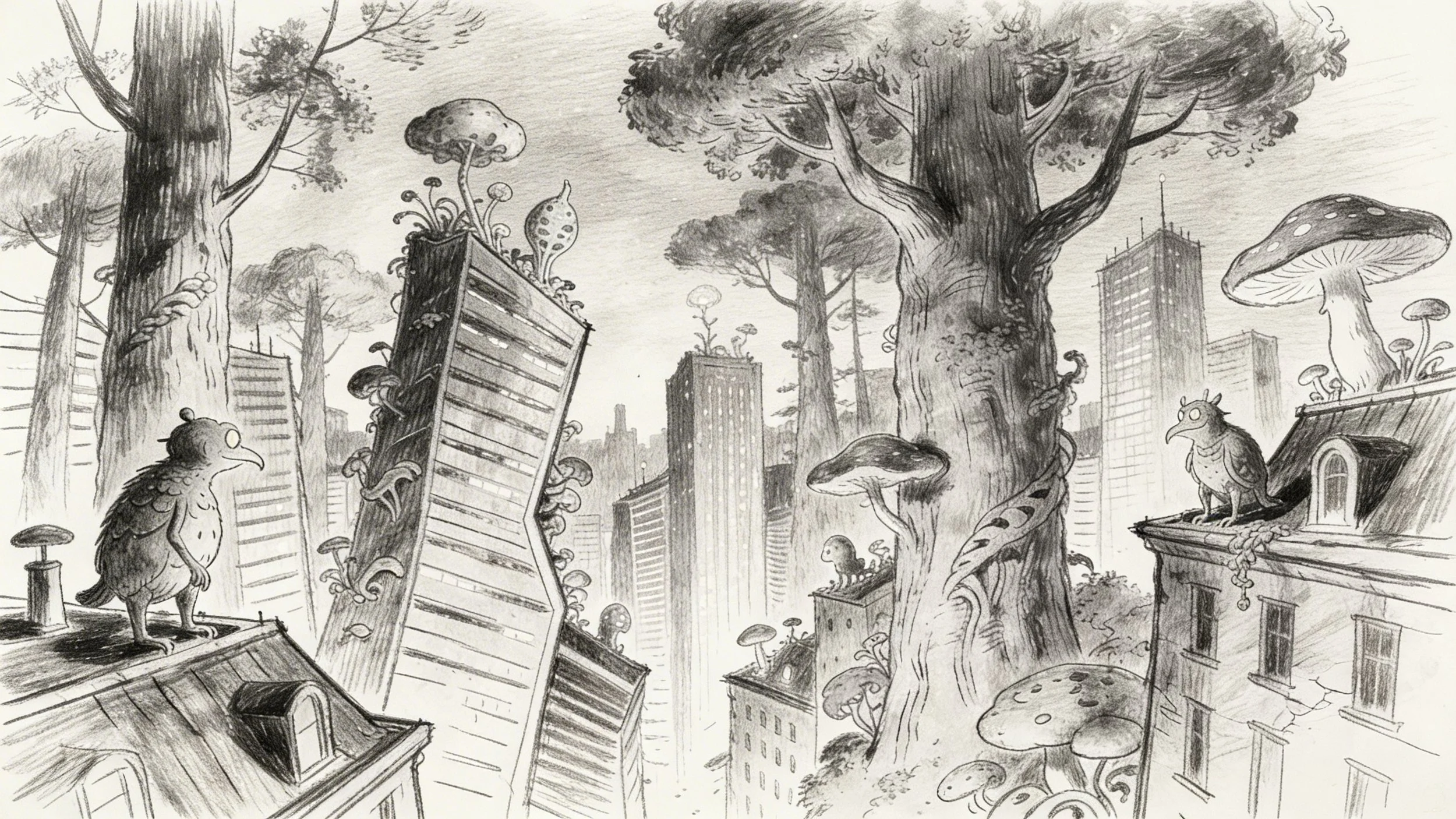

Prompt:

“Surreal animated film concept art, strange forest city skyline at night, crooked buildings fused with giant ancient trees and oversized mushrooms, bizarre creatures perched on rooftops and window ledges, strange organic growths erupting from skyscrapers, bioluminescent details, mysterious fog, inspired by Hieronymus Bosch and Studio Ghibli, rough pencil sketch style, hand-drawn storyboard aesthetic, cinematic wide establishing shot, animation pre-production art, high detail, dark atmospheric lighting”